Next-generation computing power combines heterogeneous processors, quantum accelerators, and neuromorphic memory to support adaptable, probabilistic problem solving at scale. It brings together edge inference, stochastic optimization, and real-time analytics so that hardware with very different performance and power profiles can keep pace with streaming data. The resulting landscape emphasizes low latency, portability, and disciplined governance. The practical implications for architecture, tooling, and governance remain nuanced, however, and call for closer scrutiny of how these elements converge in real-world environments.

The Evolution: From Classical to Next-Gen Computing Power

The evolution from classical to next-generation computing power marks a shift from deterministic, single-processor performance to scalable, heterogeneous capabilities that better match contemporary workloads. Along this trajectory, quantum accelerators handle specialized tasks, neuromorphic memory provides adaptive state retention, stochastic optimization supports probabilistic problem solving, and edge inference enables decentralized decision-making, all without sacrificing methodological rigor or room for open-ended exploration.

How Next-Gen Power Drives Real-Time Insights

Real-time insights come from matching heterogeneous computing resources to incoming data streams and to the cadence of the decisions those streams inform, so that data can be interpreted, filtered, and acted on as it arrives.

In practice, this means being selective about which events get processed, reducing end-to-end latency, and prioritizing work on principled grounds, all under governance rules for data privacy.
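To make selective, low-latency stream processing concrete, here is a minimal Python sketch. It assumes events arrive as simple key/value records and applies a hypothetical rule: pass along only values greater than `factor` times the rolling mean of the previous `window` values. `Event` and `select_anomalies` are illustrative names, not part of any particular product. Keeping a running total means each event is handled in constant time, which is one simple way to keep per-event latency bounded.

```python
from collections import deque
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass(frozen=True)
class Event:
    key: str      # e.g. a sensor or stream identifier (hypothetical)
    value: float  # the measured quantity


def select_anomalies(events: Iterable[Event], window: int = 5,
                     factor: float = 2.0) -> Iterator[Event]:
    """Yield only events whose value exceeds `factor` times the mean of the previous `window` values.

    A running total keeps the work per event constant, so latency does not grow
    with the size of the window.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    recent: deque = deque(maxlen=window)
    total = 0.0
    for event in events:
        # Only judge an event once a full window of history exists.
        if len(recent) == window and event.value > factor * (total / window):
            yield event
        # Keep the running total in step with the bounded deque,
        # which silently drops its oldest value on append when full.
        if len(recent) == window:
            total -= recent[0]
        recent.append(event.value)
        total += event.value


# Hypothetical readings: only the spike to 50 passes the filter.
readings = [10, 10, 10, 10, 10, 50, 10, 10]
for ev in select_anomalies(Event("sensor-1", float(v)) for v in readings):
    print(ev)
```

Everything that does not pass the rule is dropped at the source, which is the "selectivity" the paragraph describes; a production system would add privacy controls (for example, dropping or hashing identifiers) before events leave the device.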

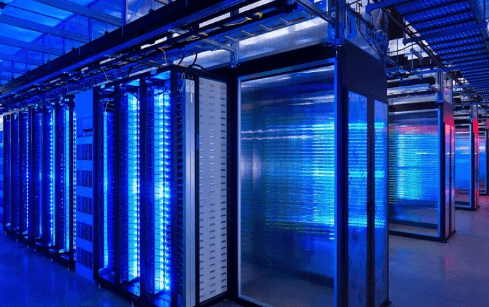

Hardware-Software Synergy: Architectures and Tools

Hardware-software synergy depends on co-designed architectures and toolchains that match computation, memory, and I/O to the characteristics of each workload. That match makes it possible to partition work efficiently, bound latency, and keep performance predictable across heterogeneous hardware.
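Workload partitioning can be illustrated with a greedy earliest-finish-time scheduler. This is a standard list-scheduling heuristic swapped in for illustration, not a method the text prescribes. The sketch assumes each device advertises a hypothetical throughput per workload kind; `Device`, `Task`, `partition`, and `makespan` are illustrative names, and a real toolchain would derive such estimates from profiling.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Device:
    name: str
    # Hypothetical throughput per workload kind, in work units per millisecond.
    throughput: dict[str, float]
    busy_until_ms: float = 0.0
    assigned: list[str] = field(default_factory=list)


@dataclass(frozen=True)
class Task:
    name: str
    kind: str    # workload kind, e.g. "matmul" or "parse"
    work: float  # abstract work units


def partition(tasks: list[Task], devices: list[Device]) -> dict[str, str]:
    """Greedy earliest-finish-time placement (mutates each device's schedule).

    Tasks are considered largest first; each goes to the device that supports
    its kind and would finish it soonest given the work already queued there.
    """
    placement: dict[str, str] = {}
    for task in sorted(tasks, key=lambda t: t.work, reverse=True):
        candidates = [d for d in devices if d.throughput.get(task.kind, 0.0) > 0]
        if not candidates:
            raise ValueError(f"no device supports workload kind {task.kind!r}")
        best = min(candidates,
                   key=lambda d: d.busy_until_ms + task.work / d.throughput[task.kind])
        best.busy_until_ms += task.work / best.throughput[task.kind]
        best.assigned.append(task.name)
        placement[task.name] = best.name
    return placement


def makespan(devices: list[Device]) -> float:
    """Time at which the last device finishes its queued work."""
    return max(d.busy_until_ms for d in devices)


# Made-up devices: the accelerator is fast at matmul but cannot parse.
cpu = Device("cpu", {"matmul": 1.0, "parse": 2.0})
gpu = Device("gpu", {"matmul": 10.0})
tasks = [Task("t1", "matmul", 100.0), Task("t2", "matmul", 50.0), Task("t3", "parse", 10.0)]
print(partition(tasks, [cpu, gpu]))
print(makespan([cpu, gpu]))
```

With these made-up numbers, both matrix tasks land on the accelerator and the parsing task on the CPU, and the last device finishes at 15 ms. Greedy placement is not optimal in general, but it is cheap and its result is easy to predict, which suits the "predictable performance" goal.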

The approach pays particular attention to edge cases and latency optimization, and it steers hardware development toward versatile, quantum-ready designs.

Evaluations of such systems focus on coherent interfaces, portability, and disciplined optimization, while still leaving engineers room to choose their own tools and methods.

Practical Impacts Across Industries and Use Cases

How do emerging computational capabilities reshape workflows and decision-making across industries? They enable targeted automation, real-time analytics, and adaptive optimization, transforming processes while preserving human autonomy over decisions.

Across sectors, practical impacts include accelerated prototyping, data-driven governance, and resilient operations.

For latency-sensitive tasks, the uptime of edge infrastructure becomes a differentiator, and cost structures shift toward variable spending concentrated on mission-critical systems.

Clarity comes from measurable outcomes, sound governance, and disciplined experimentation.

Frequently Asked Questions

What Defines Next-Generation Computing Power Compared to Today?

Compared with today's systems, next-generation computing power is defined by substantial efficiency gains, architectural innovation, and scalable parallelism that speeds up problem solving. It emphasizes energy-aware designs, new forms of hardware-software co-design, and the ability to handle larger datasets while preserving user autonomy.

How Does Real-Time Insight Differ From Traditional Analytics?

A lighthouse beam pierces fog: real-time insight outpaces traditional analytics by delivering signals as events happen rather than retrospective summaries. It supports timely decisions, whereas traditional analytics, though thorough, lags behind events and anchors actions to historical patterns.

What Are Key Hardware-Software Collaboration Patterns?

Key hardware-software collaboration patterns emphasize modular interfaces, data locality, and adaptive orchestration. Strict policies for edge-case handling and governance keep systems reliable while still allowing experimentation. Architectures favor decoupled components, observability, reproducibility, and performance that scales across heterogeneous environments.
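A modular interface with a fallback path is one common way to decouple software from the hardware it runs on. The sketch below assumes a hypothetical `Backend` protocol with a pure-Python CPU implementation and an accelerator stub. In a real system the stub would call a vendor runtime; here it reuses the CPU math so the example runs anywhere and produces identical results on both paths.

```python
from typing import Protocol, Sequence


class Backend(Protocol):
    """Hypothetical interface every compute backend implements."""
    name: str

    def available(self) -> bool: ...

    def dot(self, a: Sequence[float], b: Sequence[float]) -> float: ...


class CpuBackend:
    """Portable reference implementation in pure Python."""
    name = "cpu"

    def available(self) -> bool:
        return True

    def dot(self, a: Sequence[float], b: Sequence[float]) -> float:
        if len(a) != len(b):
            raise ValueError("vectors must have the same length")
        return sum(x * y for x, y in zip(a, b))


class AcceleratorBackend:
    """Stub standing in for a device kernel; a real one would call a vendor runtime."""
    name = "accel"

    def __init__(self, present: bool) -> None:
        self._present = present  # e.g. the result of a hypothetical device probe

    def available(self) -> bool:
        return self._present

    def dot(self, a: Sequence[float], b: Sequence[float]) -> float:
        # Same math as the CPU path so results stay reproducible across backends.
        return CpuBackend().dot(a, b)


def select_backend(backends: Sequence[Backend]) -> Backend:
    """Return the first available backend in preference order."""
    for backend in backends:
        if backend.available():
            print(f"using backend: {backend.name}")  # minimal observability hook
            return backend
    raise RuntimeError("no compute backend available")


# No accelerator present, so the CPU fallback is chosen.
backend = select_backend([AcceleratorBackend(present=False), CpuBackend()])
print(backend.dot([1.0, 2.0, 3.0], [4.0, 5.0, 6.0]))
```

Callers depend only on the protocol, so adding or removing a device type does not ripple through application code, and the printed backend choice gives a basic trace of which path actually ran.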

Which Industries Benefit Most From Next-Gen Computing?

The most data-intensive industries, such as finance, healthcare, manufacturing, and telecommunications, see the strongest impact, while others make foundational investments to improve their readiness. In every case, the emphasis is on measurable outcomes, rigorous prioritization, and the flexibility to adapt strategies to each domain.

What Are Practical Cost Considerations for Adoption?

Adoption costs vary. An initial capital expenditure (capex) around 20% higher than a conventional deployment can still yield longer-term savings if operating costs fall enough. Cost considerations include hardware, software, and talent. Implementation timelines depend on data migration and interoperability, and phased rollouts reduce risk while keeping strategic options open.
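A simple break-even calculation shows how a higher upfront capex can still pay off. The figures below are hypothetical placeholders, not data: a 20% capex premium on a 1,000,000 baseline, recovered through a 15% reduction in 400,000 of annual operating cost. Under those assumptions, the extra 200,000 is recovered at 60,000 per year, or after roughly 3.3 years.

```python
import math


def break_even_years(base_capex: float, capex_premium: float,
                     base_opex: float, opex_reduction: float) -> float:
    """Years until extra upfront spend is recovered by annual operating savings.

    capex_premium and opex_reduction are fractions (0.20 means 20%).
    Returns math.inf when there are no annual savings to recover the premium.
    """
    extra_capex = base_capex * capex_premium
    annual_saving = base_opex * opex_reduction
    if annual_saving <= 0:
        return math.inf
    return extra_capex / annual_saving


# Hypothetical figures: 20% capex premium, 15% lower annual opex.
years = break_even_years(1_000_000, 0.20, 400_000, 0.15)
print(f"break-even after {years:.1f} years")
```

The model ignores discounting, financing costs, and talent spend; it only shows why the size of the operating savings, not the capex premium alone, decides whether a higher upfront investment pays off.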

Conclusion

Like a lighthouse in a shifting sea, next-generation computing power is a beacon rather than a bridge: it guides choices without making them. It turns noisy data into focused direction while respecting governance and latency constraints. The synthesis of heterogeneous accelerators, edge inference, and probabilistic optimization is not a destination but an ongoing practice, a tool for disciplined decision-making that aligns technology's promise with real-world demands.